Having 100% of your lines of code (LOC) covered by unit tests certainly feels like a great achievement. But beware – that doesn't necessarily mean your code is perfectly covered. Line coverage is a really nice indicator of your app's stability, but it can also hide some risks.

Cyclomatic complexity

Let's say you have a simple method to test:

```php
public function calculateStuff(): int
{
    if ($this->debug) {
        // do some stuff

        return $result;
    }

    return 0;
}
```

How many test cases should you write for it? Obviously, two: one to check for a valid output if debug is true, and one when it’s false. If you only write one of them, LOC coverage will make it clearly visible that something is missing. But what if your code looks like this?

```php
public function calculateStuff(): int
{
    return $this->debug ? $this->calculateResult() : 0;
}
```

You still have exactly the same two logic paths, but only one line of code. Testing either of those paths will result in the entire method being "covered".
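Because line coverage cannot tell the two branches of the ternary apart, it pays to write one check per path deliberately. Below is a minimal sketch in plain PHP 8 (no PHPUnit), assuming a hypothetical `Report` class whose `calculateResult()` simply returns 42 as a stand-in for the real calculation:

```php
<?php

final class Report
{
    public function __construct(private bool $debug)
    {
    }

    public function calculateStuff(): int
    {
        // One line, two logical paths: line coverage marks it "covered" after either one runs.
        return $this->debug ? $this->calculateResult() : 0;
    }

    private function calculateResult(): int
    {
        return 42; // hypothetical stand-in for the real calculation
    }
}

// Path 1: debug enabled, so the calculation runs.
assert((new Report(true))->calculateStuff() === 42);

// Path 2: debug disabled, so the method short-circuits to 0.
assert((new Report(false))->calculateStuff() === 0);
```

In PHPUnit these would typically become two test methods (or one test fed by a data provider), so a failure points straight at the path that broke.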

And what about this code?

```php
public function onException(ExceptionEvent $event): void
{
    if (!$this->debug) {
        $event->setResponse($this->renderErrorPage());
    }
}
```

If you first test the case when $debug === false, then grab some lunch, and then come back not remembering the details but seeing 100% coverage – will you remember to also test the other case?
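One way to keep the "nothing happens" path from slipping through is to test it explicitly and assert the absence of the effect. The sketch below (PHP 8) swaps Symfony's `ExceptionEvent` for a hypothetical minimal `FakeExceptionEvent` that just stores a string, so it runs without a framework; `ErrorPageListener` and its error page text are illustrative names, not taken from the original code.

```php
<?php

// Hypothetical stand-in for Symfony's ExceptionEvent: it only stores a response.
final class FakeExceptionEvent
{
    private ?string $response = null;

    public function setResponse(string $response): void
    {
        $this->response = $response;
    }

    public function getResponse(): ?string
    {
        return $this->response;
    }
}

final class ErrorPageListener
{
    public function __construct(private bool $debug)
    {
    }

    public function onException(FakeExceptionEvent $event): void
    {
        if (!$this->debug) {
            $event->setResponse($this->renderErrorPage());
        }
    }

    private function renderErrorPage(): string
    {
        return 'Something went wrong'; // hypothetical error page
    }
}

// Production mode: the listener replaces the response with the error page.
$event = new FakeExceptionEvent();
(new ErrorPageListener(false))->onException($event);
assert($event->getResponse() === 'Something went wrong');

// Debug mode: every line is already "covered" by the case above,
// yet this path still needs its own assertion that nothing was set.
$event = new FakeExceptionEvent();
(new ErrorPageListener(true))->onException($event);
assert($event->getResponse() === null);
```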

Summing up: having the tests execute every single line of your code still does not guarantee that all the logical paths of your class are being executed.

Missing assertions

Also: having all the logical paths executed doesn’t necessarily mean you got them tested.

```php
use PHPUnit\Framework\TestCase;

class Foo
{
    public function run($input)
    {
        // lots of complex logic
        // write the result to a file

        return $output;
    }

    // lots of private methods
}

class FooTest extends TestCase
{
    public function testRun()
    {
        $foo = new Foo;
        $this->assertEquals(8, $foo->run(123));
        // other, similar test cases
    }
}
```

It's quite possible that this test will cover every single line of all that complex logic. But what about the side effects? We are writing something to a file, after all (a log, maybe?). We are testing that the output is correct for a given input, but we forgot to test that the side effects also work as expected.

Ideally, of course, you would avoid side effects altogether and write code in a more functional style, but that is not always easy to do. So the second-best option is this: don't trust your coverage blindly; check whether what was covered was also tested.
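A minimal sketch of asserting the side effect as well as the return value, assuming (unlike the original `Foo`) that the output file path is passed in through the constructor, so a test can point it at a temporary file. The doubling in `run()` is only a placeholder for "lots of complex logic"; requires PHP 8.

```php
<?php

final class Foo
{
    // The output path is injected so a test can redirect the side effect.
    public function __construct(private string $outputPath)
    {
    }

    public function run(int $input): int
    {
        $output = $input * 2; // placeholder for "lots of complex logic"

        // Side effect: append the result to a file (e.g. a log).
        file_put_contents($this->outputPath, "run($input) = $output\n", FILE_APPEND);

        return $output;
    }
}

$path = tempnam(sys_get_temp_dir(), 'foo');
$foo = new Foo($path);

// Asserting the return value alone would leave the file write untested...
$result = $foo->run(123);
assert($result === 246);

// ...so read the file back and assert the side effect too.
assert(file_get_contents($path) === "run(123) = 246\n");

unlink($path);
```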

Leaky coverage

It’s virtually impossible to mock all the dependencies away. Well, ok, it’s possible, but sometimes it’s more effort than it’s worth. Most of the time when you’re testing class X, some lines of classes Y and Z will also get executed. Usually, it’s harmless. But sometimes it could blow up in your face – you could get the entire class Y “covered” without actually testing it. It’s then very tempting to just leave it as it is (100% achieved, why would I even bother?) and very simple to just miss it.

That's why it's a great habit to tell the testing framework what exactly you are testing in a specific test case. In PHPUnit you can do this with the @covers annotation (or, since PHPUnit 10, the #[CoversClass] attribute). If you specify that XTest @covers X, PHPUnit will still execute the code of Y and Z, but it will not count those lines as covered.

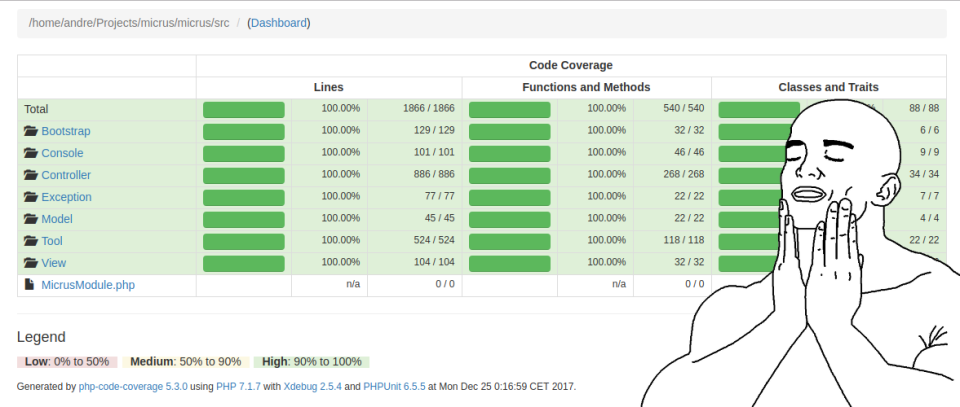

Achieving 100%

Let's not kid ourselves – the test cases for more complex applications are never perfect in the sense of covering every single scenario your code might run into. It's just not worth the effort; "good enough" is usually good enough.

Still, having high coverage is a good indicator. The higher it is, and the more meaningful it is (in terms of what I wrote above) – the safer you can feel when refactoring your code, or modifying it in any other way.

But getting to 100% is not easy.

Testable code

Follow clean code rules and use design patterns. If you repeat yourself (violating DRY), you'll have to repeat the tests as well. If your class has multiple responsibilities (violating SRP), it becomes considerably more difficult to test. Testing A and B together can sometimes be far harder than testing A and then testing B.

Use inversion of control, for example dependency injection (DI). If your class creates its own dependencies or fetches them as singletons, it's almost impossible to reliably mock those dependencies away. If you just pass them in through the constructor, though, it's easy to pass a mock instead of an actual object.
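A minimal sketch of constructor injection (PHP 8), assuming a hypothetical `Clock` interface and `TokenValidator` class: because the validator receives its clock instead of creating one, a test can hand it a fixed-time fake (here an anonymous class) and get deterministic results. The production implementation can even stay final, since nothing needs to extend it.

```php
<?php

interface Clock
{
    public function now(): int;
}

// Production implementation; final is fine because consumers depend on the interface.
final class SystemClock implements Clock
{
    public function now(): int
    {
        return time();
    }
}

final class TokenValidator
{
    // The dependency is passed in, not created with `new SystemClock()` or fetched from a singleton.
    public function __construct(private Clock $clock)
    {
    }

    public function isExpired(int $expiresAt): bool
    {
        return $this->clock->now() >= $expiresAt;
    }
}

// In a test, pass a fake that always reports the same time.
$fixedClock = new class implements Clock {
    public function now(): int
    {
        return 1000;
    }
};

$validator = new TokenValidator($fixedClock);
assert($validator->isExpired(999) === true);
assert($validator->isExpired(1001) === false);
```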

The simpler your code, the easier it is to test. When writing tests for Micrus v4.0, I was surprised how much my coverage grew, not by adding new tests, but by removing some not-so-useful, outdated or overly complex code.

AspectMock

There is a really fancy and clever PHP library, AspectMock, that uses the power of aspect-oriented programming to make everything testable: static methods, final classes, global system functions, dependencies created inside the tested class – everything. It basically modifies the source code at runtime, letting you do anything with it.

Awesome as it may be, I've decided to move away from it. Micrus v4.0 doesn't use it at all, even though in v3.0 it was the basis for every single mock. Why?

It adds quite an overhead, both in terms of speed and of the time spent configuring it (in the best-case scenario the setup is very simple, but as soon as anything is misconfigured, debugging becomes a nightmare).

But most importantly: it lets you get away with ugly code. Why would you care about passing the dependencies in the constructor, if AspectMock lets you mock all the anti-patterns you can think of? Why would you care about making your code testable (= simple), if everything is testable?

If you depend on legacy code, AspectMock might be your only chance to test some parts of it at all. However, if you're writing something modern, I'd recommend avoiding this kind of magic.

Alternatives

How do you achieve 100% coverage without resorting to AspectMock or similar magic? I've got some advice more specific than just SOLID or DI (although those are essential).

Most importantly: mocking global functions. That's the feature for which I was considering staying with AspectMock after all. Then I found this article, showing how to achieve a similar result without that much overhead. It wasn't easy to use and reuse, though, so I decided to write a solution that is. That's how Avris FunctionMock was born.

Basically it lets you write something like:

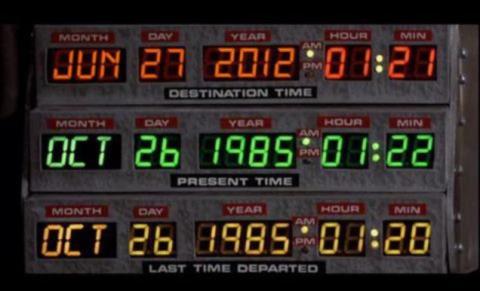

```php
FunctionMock::create(__NAMESPACE__, 'time', 123456789);
```

and it will make all the calls to time() inside __NAMESPACE__ return 123456789, regardless of what the actual time is. You can also use a callback, disable a mock, and validate how many times and with what arguments the mock was invoked.
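Tools like this typically rely on PHP's namespace fallback: an unqualified call such as `time()` inside namespace `App` resolves to `App\time()` if that function exists, and only otherwise to the global `time()`. The sketch below shows that mechanism by hand; it is not FunctionMock's implementation or API, and `App`, `Testing\FakeTime` and `secondsSince()` are hypothetical names. One caveat: the override must exist before the calling code first runs, because PHP caches the resolved function per call site.

```php
<?php

namespace Testing {
    // Hypothetical switch: null means "use the real clock".
    final class FakeTime
    {
        public static ?int $now = null;
    }
}

namespace App {
    use Testing\FakeTime;

    // Because this function exists, unqualified time() calls inside namespace App land here.
    function time(): int
    {
        // The leading backslash reaches the real global time().
        return FakeTime::$now ?? \time();
    }

    // Code under test: it calls time() unqualified and knows nothing about the mock.
    function secondsSince(int $timestamp): int
    {
        return time() - $timestamp;
    }
}

namespace {
    use Testing\FakeTime;

    FakeTime::$now = 123456789;
    assert(App\secondsSince(123456700) === 89);

    // "Disable" the mock: the real clock is used again.
    FakeTime::$now = null;
    assert(App\secondsSince(0) > 123456789);
}
```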

When it comes to accessing private/protected methods and properties (which you shouldn't do, but sometimes have to), reflection comes to the rescue:

```php
$r = new \ReflectionProperty(Application::class, 'commands');
$r->setAccessible(true);
$commands = array_keys($r->getValue($consoleApp));
$this->assertEquals(['help', 'list', 'test'], $commands);
```

or

```php
$r = new \ReflectionMethod(FillEnvCommand::class, 'getDefaults');
$r->setAccessible(true);
$defaults = iterator_to_array($r->invoke($command));
```
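A self-contained version of the same reflection trick, assuming a hypothetical `CommandRegistry` with a private property and a private generator method. Since PHP 8.1, reflection can access private members without `setAccessible(true)` (the call became a no-op), so it only matters on older versions.

```php
<?php

final class CommandRegistry
{
    /** @var array<string, callable> */
    private array $commands = [];

    public function add(string $name, callable $handler): void
    {
        $this->commands[$name] = $handler;
    }

    private function getDefaults(): \Generator
    {
        yield 'env' => 'dev';
        yield 'debug' => '1';
    }
}

$registry = new CommandRegistry();
$registry->add('help', fn () => 'help');
$registry->add('list', fn () => 'list');

// Read a private property.
$property = new \ReflectionProperty(CommandRegistry::class, 'commands');
$property->setAccessible(true); // required before PHP 8.1, a no-op afterwards
$commands = array_keys($property->getValue($registry));
assert($commands === ['help', 'list']);

// Call a private method that returns a generator.
$method = new \ReflectionMethod(CommandRegistry::class, 'getDefaults');
$method->setAccessible(true);
$defaults = iterator_to_array($method->invoke($registry));
assert($defaults === ['env' => 'dev', 'debug' => '1']);
```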

If you're being nice and keep your classes final (to enforce composition over inheritance), you should also be nice and make them implement an interface, and have their consumers depend on that interface (the dependency inversion principle). You can't mock a final class, but you can mock its interface.

And when it comes to mocking static methods: if you need to mock them, you're doing something wrong. Static methods should be avoided, except for things like factories.

Summing up

Having high code coverage is nice, but remember that it might give you a false sense of security. Don't rely on that one number alone. And that's basically all I wanted to say.

Avris
